CAG: A Streamlined Approach to AI Knowledge Tasks

Published on January 14, 2025

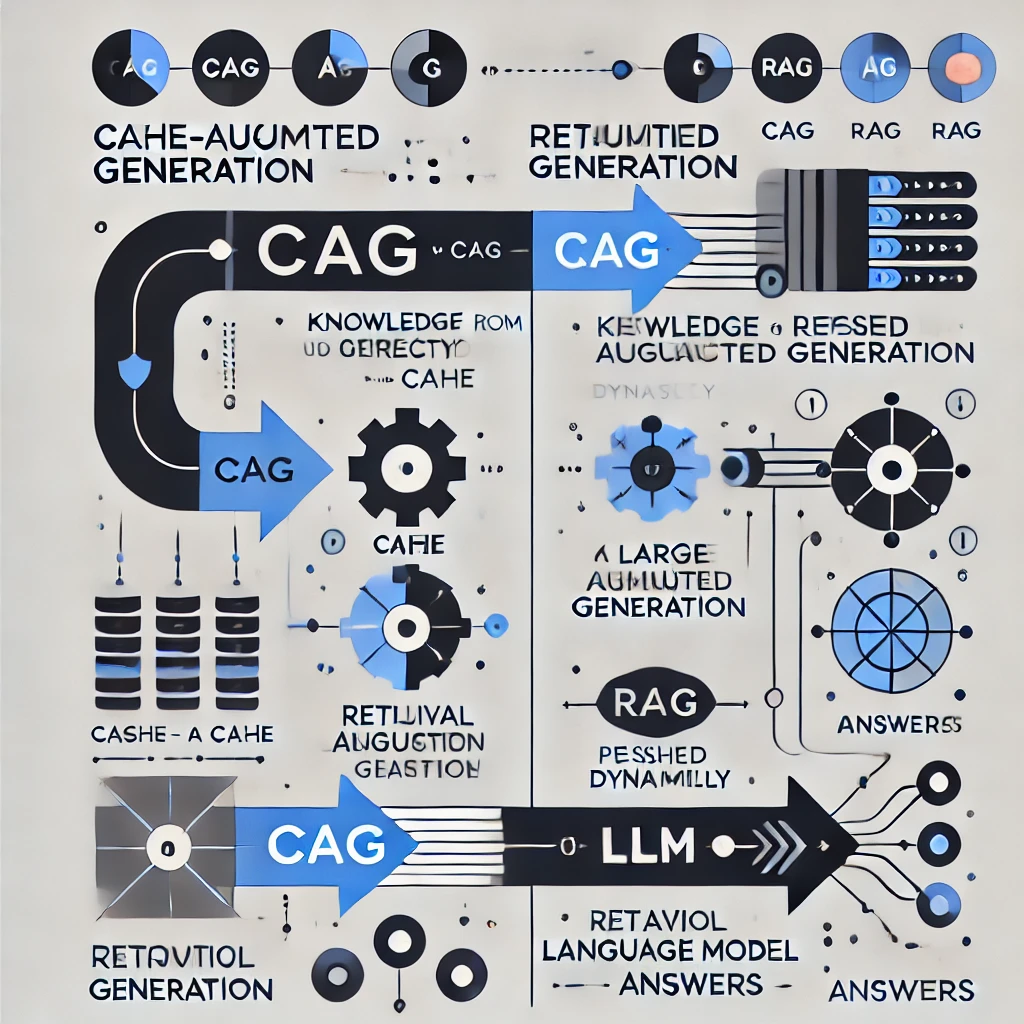

Cache-Augmented Generation (CAG) is a method for knowledge-intensive AI tasks that bypasses the traditional Retrieval-Augmented Generation (RAG) pipeline. Instead of fetching documents at query time, it preloads the relevant knowledge into a long-context model and precomputes the key-value (KV) cache, so no real-time retrieval is needed during inference.

The research, conducted by Brian J. Chan and his team, highlights how CAG addresses three significant challenges in RAG: retrieval latency, errors in document selection, and increased system complexity. By using long-context large language models (LLMs) with preloaded resources, CAG removes the retrieval step from the serving path while keeping the full knowledge base available to the model.

Key Insights from the Research

1. Eliminating Retrieval Bottlenecks: CAG replaces dynamic retrieval with preloaded knowledge, drastically reducing inference times and errors associated with retrieval pipelines.

2. Leveraging Long-Context LLMs: Modern LLMs, such as Llama 3.1, are equipped to handle extended context windows, allowing for holistic reasoning over large datasets without real-time retrieval.

3. Simplified Architecture: By precomputing KV caches, CAG reduces the complexity of system architecture, making it easier to maintain and scale for various applications.

4. Benchmark Performance: Experiments on datasets like SQuAD and HotpotQA show that CAG not only matches but often outperforms RAG in both efficiency and accuracy.
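
To make the idea of a precomputed KV cache concrete, here is a minimal sketch of why the keys and values for the knowledge tokens can be computed once and reused. It is a toy single-head attention in plain Python, not the paper's implementation: `embed`, `kv`, and `attend` are hypothetical helpers, and the fixed arithmetic inside `kv` stands in for learned projection weights. The point it illustrates is that, with causal attention, the cached entries for earlier tokens do not depend on the question that arrives later.

```python
import math


def embed(token):
    # Hypothetical deterministic 2-d "embedding"; a real model uses learned weights.
    s = sum(ord(c) for c in token)
    return [math.sin(s), math.cos(s)]


def kv(token):
    # Stand-ins for the key and value projections of one token.
    e = embed(token)
    key = [e[0] + e[1], e[0] - e[1]]
    value = [2 * e[0], 3 * e[1]]
    return key, value


def attend(query_vec, cache):
    # Softmax attention of one query vector over all cached (key, value) pairs.
    scores = [sum(q * k for q, k in zip(query_vec, key)) for key, _ in cache]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * value[i] for w, (_, value) in zip(weights, cache)) / total
            for i in range(2)]


docs = "cag preloads documents into the model context".split()
question = "what does cag preload".split()

# CAG-style: keys and values for the knowledge tokens are computed once.
doc_cache = [kv(t) for t in docs]


def answer_with_cache(question_tokens):
    # Only the question tokens are encoded at query time.
    cache = doc_cache + [kv(t) for t in question_tokens]
    return attend(embed(question_tokens[-1]), cache)


def answer_from_scratch(question_tokens):
    # Baseline: re-encode the documents together with the question.
    cache = [kv(t) for t in docs + question_tokens]
    return attend(embed(question_tokens[-1]), cache)


cached = answer_with_cache(question)
recomputed = answer_from_scratch(question)
```

Both paths produce the same attention output; the cached path simply skips re-encoding the documents for every question.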

"CAG introduces a retrieval-free paradigm that ensures faster, more reliable, and contextually rich AI outputs, redefining the landscape of knowledge-intensive tasks."

Methodology and Implementation

The CAG framework operates in three phases: external knowledge preloading, inference, and cache reset. In the preloading phase, the documents are formatted and encoded into a KV cache, which is then stored for reuse. During inference, the query is processed on top of this preloaded cache, so the model already has the context it needs and no real-time retrieval takes place. The cache reset phase discards what was appended during inference, so the same preloaded cache can serve the next query or session without being rebuilt, which keeps memory use and performance steady.
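
The three phases can be sketched as a small lifecycle. This is a toy model under stated assumptions, not the authors' code: `ToyKVCache` is a hypothetical class, whitespace-split words stand in for tokens, plain strings stand in for the per-layer key/value tensors a real cache would hold, and reset is modeled as truncating the cache back to its preloaded length, which is one plausible reading of the reset step.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyKVCache:
    """Toy stand-in for a KV cache: one entry per token already processed."""

    entries: List[str] = field(default_factory=list)
    preloaded_len: int = 0  # how many entries belong to the preloaded knowledge

    def preload(self, documents: List[str]) -> None:
        """Phase 1: encode the whole knowledge base once, before any query."""
        for doc in documents:
            self.entries.extend(doc.split())
        self.preloaded_len = len(self.entries)

    def infer(self, query: str) -> int:
        """Phase 2: append the query tokens; no retrieval step runs.

        Returns the number of cached tokens the model could attend over.
        """
        self.entries.extend(query.split())
        return len(self.entries)

    def reset(self) -> None:
        """Phase 3: drop everything appended after preloading."""
        del self.entries[self.preloaded_len:]


cache = ToyKVCache()
cache.preload(["CAG preloads documents.", "The KV cache is computed once."])
visible = cache.infer("What does CAG preload?")
cache.reset()
```

After `reset()`, the cache holds only the preloaded entries again, so the next query starts from the same state without re-encoding the documents.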

Performance tests demonstrate the substantial advantages of CAG over traditional RAG systems. For instance, in scenarios with small to medium knowledge bases, CAG achieves higher accuracy with significantly reduced generation times.

Research Implications

The findings suggest that CAG is particularly suited for applications where the knowledge base is constrained and manageable. By preloading all necessary information into the model’s extended context, CAG avoids the pitfalls of retrieval errors and latency, making it an ideal choice for use cases such as document summarization, multi-turn dialogue systems, and complex question answering.
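
Whether a knowledge base is "constrained and manageable" comes down to whether it fits in the model's context window with room left for the query and the answer. The check below is a rough sketch under assumptions: `estimate_tokens` and `fits_in_context` are hypothetical helpers, the 1.3 tokens-per-word ratio is a heuristic rather than a measurement, and the 128,000-token window is an assumed figure for a 128K-context model. In practice you would count tokens with the model's own tokenizer.

```python
from typing import List


def estimate_tokens(text: str, tokens_per_word: float = 1.3) -> int:
    """Rough token estimate from a whitespace word count (assumed heuristic)."""
    return int(len(text.split()) * tokens_per_word)


def fits_in_context(
    documents: List[str],
    context_window: int,
    reserved_for_query: int = 1024,
) -> bool:
    """True if all documents plus a reserved query/answer budget fit the window."""
    needed = sum(estimate_tokens(doc) for doc in documents)
    return needed + reserved_for_query <= context_window


# Fifty documents of about 1,000 words each versus two hundred of them.
small_kb = ["word " * 1000] * 50
large_kb = ["word " * 1000] * 200

small_ok = fits_in_context(small_kb, context_window=128_000)
large_ok = fits_in_context(large_kb, context_window=128_000)
```

When the check fails, the knowledge base is too large to preload in full, and a retrieval step (or a smaller, curated document set) is still needed.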

To delve deeper into the groundbreaking research behind Cache-Augmented Generation, explore the full paper on arXiv.